SAN FRANCISCO — “1, 2, 3, 4, we don’t want a robot war!”

The chant echoed outside OpenAI’s headquarters, where a small throng of protesters had gathered on Tuesday to warn of a looming AI surveillance state.

“5, 6, 7, 8, no AI surveillance state!”

A handful of what I assume were OpenAI employees intermittently walked in and out of the building, studiously avoiding eye contact. As bleak as the slogans were, the juxtaposition of the mundane and the apocalyptic had a certain dark comedy to it.

The demonstrators were on hand to protest the dramatic turn of events that saw OpenAI take Anthropic’s place as the United States’ lead vendor for military AI services. On Thursday, the Pentagon said it had notified Anthropic that it considers the company a “supply chain risk,” a designation previously reserved for foreign adversaries.

OpenAI is now reportedly back at the negotiating table with the Pentagon, seeking to add yet more safeguards to its contract after an earlier effort failed to reassure the public. (Anthropic is said to be continuing to negotiate with the military as well.)

The protest was the latest in a wave of anti-AI demonstrations that have taken place around the country. Last week, hundreds marched outside DeepMind, OpenAI, and Meta’s headquarters in an anti-AI protest that organizers said drew up to 500 people. Last month, 200 marched on Virginia’s state capital to protest the data centers being built in the state.

For OpenAI, initial doubts about the strength of the company’s safeguards against the use of its technologies for domestic surveillance and autonomous weapons have metastasized into a full-blown PR crisis. On Tuesday, the company’s dealmaking resulted in a spur-of-the-moment protest that drew about three dozen people to OpenAI’s San Francisco headquarters. (OpenAI did not respond to a request for comment.)

Heading into the protest, I wondered what sort of person would show up, and what they would demand. Over the past week, Reddit posts about quitting ChatGPT have been getting tens of thousands of upvotes, and commenters have offered a pretty broad range of talking points, from “You’re now training a war machine. Let’s see proof of cancellation,” to the also very common “I do like to celebrate any sort of downfall for OpenAI.” People were typing up a storm about the AI bubble, their dislike of Trump, data privacy, protecting artists, and the water and electricity costs of data centers.

Would the OpenAI protesters present as anti-AI Luddites? Hippies worried about data centers’ effect on local water supplies? Quarter-zip-wearing technocrats with a sworn allegiance to Claude?

I took out my notebook and pen and started introducing myself to strangers.

One organizer I spoke with was an anonymous man wearing a cardboard robot mask, who identified himself as “The Last Friendly Robot.”

I asked him why he was here. Well, he told me, “Who wants mass surveillance? Only the Epstein class.” (Epstein himself was not known as a defender of surveillance, of course — he did his evil deeds on a private island. The robot’s point was that billionaires often build powerful surveillance technologies while simultaneously going to great lengths to maintain their own privacy.)

The robot told me he was even further disturbed by the idea of AI-powered weapons that could “kill with no conscience, and no human in the loop.”

He’d worked in tech, and knew people at the big AI companies, he said. He felt the new contract wasn’t true to the promise of tech: “This isn’t what we came to the Valley to do. We came to build tech that makes people free!”

The robot was echoing a sentiment that rang throughout the protest: that OpenAI’s employees are complicit in something bad. “Quit your job!” — another protest chant — was also written on the street in chalk, one of many colorful drawings protesters were chalking outside of headquarters.

I talked to a young woman named Perrin Milliken, who works at a climate nonprofit. She told me she had been concerned about “data centers, and how they consume so much water and electricity” in local communities.

(While data centers can sometimes strain the supply in water-stressed areas, experts have pointed out that commonly reported statistics about how much water AI uses are too high, sometimes by orders of magnitude. But the electricity issue is real, as evidenced by recent AI lab commitments to pay for the price hikes that otherwise would be borne by consumers.)

While she hadn’t been following the DoD issue closely, Milliken told me, OpenAI’s recent actions concerned her.

“It was scary how quickly they were fine with whatever the government wanted,” Milliken said. The week’s events had pushed her to switch from ChatGPT to Gemini. You “can’t be complacent in this moment,” she told me, a moment rife with “very powerful, wealthy people who don’t have the interests of people in mind.”

Most of the people I spoke to at the protest told me they use AI regularly — daily, even. Many said they had recently switched away from ChatGPT. And in peak San Francisco fashion, several told me they hadn’t needed to “QuitGPT” at all: they already had an unshakeable loyalty to Claude.

One of the few true AI abstainers present was Rick Girling, a retired economics and history teacher. He told me he had become concerned about AI after reading Karen Hao’s Empire of AI, a critical history of Sam Altman and OpenAI. Also, he said, “I hate billionaires.” (Several protesters were handing out a petition for taxing billionaires — a measure headed to the California ballot.)

“I hate the notion that people with so much money can tell us what to do, and they’re not accountable,” Girling added. OpenAI’s embrace of the military reminded him of Elon Musk’s takeover of Twitter, he said, and how X’s recommendation algorithm gradually came to steer people to the right. X deserved at least some of the blame for Trump’s re-election, he said.

To Girling, surveillance and autonomous weapons felt like another manifestation of the billionaires’ anti-democratic agenda. AI tools concern him because, as he put it, “it’s much easier to kill someone if you don’t see them.”

Girling pointed at a protest sign with a picture of a humanoid robot and told me that he increasingly feared “stuff like this.”

I told Girling that the picture he had just pointed at wasn’t real — it was an AI-generated meme of Sam Altman and Pete Hegseth posing with the Terminator.

He laughed.

“Oh my god,” he said. “It looks so real!”

A software engineer named River Bellamy told me that what most concerns him is the Pentagon’s move to crush Anthropic, which he said OpenAI had been complicit in. Hegseth’s move to retaliate after Anthropic didn’t agree to his terms is “third-world dictator behavior,” he said. “People have the right to say no to a contract.”

(OpenAI has said it objects to Anthropic’s supply chain risk designation. But plenty of protesters told me they nonetheless saw the company’s decision to accept the defense contract as a betrayal.)

Protesters’ worries extended well beyond surveillance and murderbots. David Krueger, a computer science professor who believes AI could cause human extinction, offered a dire message to his fellow protesters. “It will kill everybody,” he said. “If it doesn’t kill everybody, we will lose power slowly, and we will die slowly.”

Krueger’s comments underscored the way in which the QuitGPT protesters’ concerns about the effects of AI ran the gamut: massive job loss, environmental concerns, effects on education and human relationships, billionaires’ consolidation of power, government overreach, and even human extinction. Some expressed worries about OpenAI’s increasingly close relationship with the Trump administration; others had less partisan concerns. Some could quote me niche details about OpenAI’s contract with the DoD, while others had only seen a headline or two.

Krueger told me this was exactly what he was interested in forming: a “broad anti-AI coalition.” But as I left the protest, I found myself wondering whether there was really a coalition here at all.

The group gathered outside OpenAI’s office on Tuesday did not present itself as a movement so much as maybe the first draft of one. The people who showed up seemingly all hated the idea that OpenAI might help to normalize military uses of the technology that the company itself had once balked at. Beyond that, though, their politics were all over the place. And it made me doubt how effective the nascent anti-AI movement could be.

Particularly given the massive increase in lobbying that OpenAI and its peers have undertaken as public opinion sours on AI. Pro-AI political action committees have raised almost $200 million to date, more than double the amount raised by pro-regulation groups. Meta alone plans to spend $65 million in the midterm elections to support pro-AI candidates, the most it has ever spent on an election.

And beyond a stated dislike for OpenAI, what did these people really have in common?

Krueger named one common theme: “Nobody has ever wanted killer robots.”

Except that the military does seem to want killer robots, and for the most part AI companies have been eager to help build them. Google still has its DoD contract. Even Anthropic isn’t fully against them — they recently participated in a Pentagon autonomous drone swarm contest. (Dario Amodei’s stated objection to murderbots at this moment is that Claude is not yet reliable enough to operate them.)

To me, this felt like the most uncomfortable aspect of Tuesday’s protest. There is seemingly no one in the current administration, and no AI company, that shares the values of the demonstrators. AI is reshaping the world with only minimal input from many of the people who will be affected most. The protesters felt largely powerless to shape the path of a technology that might result in their surveillance or even their death.

Still, as the protest wound down, I watched people joking with each other, taking pictures of each other’s signs, exchanging contact information. Despite everything, the QuitGPT protesters seemed to be having fun.

For the moment, QuitGPT feels less like a coherent boycott than an early test of whether and how public unease about AI might be turned into organized politics. Anthropic’s clash with the Pentagon and OpenAI’s decision to sign its own deal have given that unease a focal point. The group demonstrating outside OpenAI this week isn’t yet a coalition. But it might give us a hint about how one begins.

On the podcast this week: Kevin and I talk through the latest developments between Anthropic, OpenAI, and the Pentagon. Then, we investigate how prediction markets are making a bad situation even worse in Iran. And finally, the Times’ Arijeta Lajka joins us to discuss the flood of surreal and sometimes disturbing AI slop that YouTube is feeding children through Shorts. Is it Elsagate all over again?

Apple | Spotify | Stitcher | Amazon | Google | YouTube


Following

The Pentagon declares Anthropic a supply chain risk

What happened: The Pentagon told Anthropic Thursday it has formally deemed the company a supply chain risk, delivering on its threat after negotiations fell apart. The two parties couldn’t agree on contract language regarding safety guardrails to prevent the use of Anthropic’s products in mass domestic surveillance and lethal autonomous weapons.

Defense Secretary Pete Hegseth gave military contractors six months to stop using Anthropic’s AI.

As recently as last night, Anthropic CEO Dario Amodei was reportedly still in negotiations with the Defense Department, though.

But a leaked Slack memo from Amodei may have damaged his cause. In it, Amodei called the guardrails OpenAI agreed to with the Pentagon “maybe 20% real and 80% safety theater,” and accused the Trump administration of freezing Anthropic out because “we haven’t given dictator-style praise to Trump” like OpenAI did.

He also blasted OpenAI’s acceptance of the Pentagon’s terms: “the main reason OAI accepted them and we did not is that they cared about placating employees, and we actually cared about preventing abuses.” Much of the Pentagon and OpenAI’s messaging was “just straight up lies,” Amodei wrote.

Late Thursday, Amodei apologized for the memo.

“It was a difficult day for the company, and I apologize for the tone of the post,” Amodei said. “It does not reflect my careful or considered views. It was also written six days ago, and is an out-of-date assessment of the current situation.”

Why we’re following: Claude is already a commonly used tool in US military systems. It has played a central role in US operations in Iran, most recently helping to analyze vast amounts of data in military strikes.

And seemingly the entire tech industry is pulling for Anthropic here. The Information Technology Industry Council, a big tech industry group whose members include Anthropic backers Nvidia and Amazon as well as rival OpenAI, said it is concerned about the supply chain risk designation.

Anthropic investors have reportedly expressed their support to executives, with discussions focused on avoiding a ban on Pentagon contractors using Anthropic’s tech. But some investors told Reuters they are frustrated with Amodei for antagonizing Pentagon officials.

What people are saying: President Trump inserted himself into the narrative. “I fired Anthropic. Anthropic is in trouble because I fired [them] like dogs, because they shouldn’t have done that,” he told Politico.

“Might’ve been that leaked Slack message that pushed it across,” Big Technology’s Alex Kantrowitz wrote on X, responding to a report on the supply chain risk designation.

Lockheed Martin said it plans to follow the Department of Defense’s Anthropic ban.

OpenAI CEO Sam Altman took jabs at Anthropic at a conference Thursday. It’s “bad for society,” he said, if companies start abandoning their commitment to the democratic process because “some people don’t like the person or people currently in charge.”

“The government is supposed to be more powerful than private companies,” Altman said.

Elsewhere, an OpenAI spokesperson said Sam Altman misspoke in saying OpenAI is looking to deploy on all NATO classified networks. Apparently, he meant “unclassified networks.”

—Lindsey Choo

Side Quests

Iran’s state media said Iran carried out a drone strike on Amazon’s Bahrain data center because of the company’s support of “US military and intelligence activities.”

A NYT analysis found that an unusual number of Polymarket accounts bet the U.S. would strike Iran the day before the actual strike. Day-before bets are typically rare, but over 150 accounts made them that day. Following an understandable backlash, Polymarket removed some long-running markets speculating on nuclear detonation, including a 2025 contract that had $1.7 million in volume.

Canada says Sam Altman agreed to take immediate steps to strengthen OpenAI’s safety protocols around notifying police about potentially suspicious ChatGPT use. OpenAI previously failed to notify law enforcement about concerning activity from the Tumbler Ridge shooter.

OpenAI launched GPT-5.4, which it says is the “most capable and efficient frontier model for professional work” — and its first with native computer-use capabilities.

OpenAI picked two law firms to prepare for its IPO, in one of its first concrete steps toward a public listing. OpenAI recently started building a GitHub alternative, after engineers had issues with GitHub outages. OpenAI released a Codex app for Windows with native sandboxing and support for developer environments in PowerShell.

OpenAI hit $25 billion in annualized revenue by the end of February, up from $21.4 billion at the end of 2025. OpenAI is also scaling back direct shopping inside ChatGPT via Instant Checkout; checkouts will instead take place in other apps that plug into ChatGPT.

Apple implemented geoblocking in January 2026 to prevent U.S.-based users from downloading or updating ByteDance’s Chinese apps.

A look at why China’s policymakers and public seem more optimistic about AI than the U.S. Part of the answer: Chinese tech companies focus more on real-world applications. And China’s new five-year blueprint introduced an “AI+ action plan” and outlined investments in quantum computing, 6G, embodied AI, and more.

Google, Microsoft, OpenAI, and others signed a pledge at the White House to bear the cost of new electricity generation to power their data centers. The pledge relies on enforcement by local utilities and states via rate deals, without penalties for those refusing to comply.

Elon Musk defended his July 2022 social media posts in a Twitter shareholder trial. Litigants allege he made misleading statements before trying to back out of acquiring Twitter.

A new report suggests Meta sends reviewers in Kenya smart glasses footage that shows “bathroom visits, sex and other intimate moments.” At Meta’s New Mexico child safety trial, Mark Zuckerberg downplayed Meta’s findings on how the company’s apps affect users and teens. Meta agreed to let rival “general purpose AI chatbots” access WhatsApp for a fee in Europe for a 12-month period to avoid potential interim measures from the E.U.

TikTok says it won’t add end-to-end encryption to DMs. TikTok argues that police and its safety teams need to be able to read messages to protect young users, which E2EE would prevent.

A New York bill would ban chatbots from impersonating licensed professionals like doctors and lawyers and giving “substantive response, information, or advice.” A de facto ban on chatbots?

A study showed that ChatGPT Health underestimated the severity of medical emergencies 51.6% of the time and overestimated the severity of non-urgent cases 64.8% of the time.

A new report shows LLMs can uncover the identities behind pseudonymous internet accounts better than traditional methods, finding the real identities of 68% of Hacker News profiles. Gulp.

The UK government committed an initial £40 million to establish a lab for “blue-sky” AI research, seeking breakthroughs in science, health care, and transportation for less than the cost of one Meta Superintelligence Labs employee.

Google announced an Android app store program and lower developer fees. It made these changes to comply with new rules in Europe and elsewhere, and to resolve Epic’s antitrust litigation.

Google’s Epic settlement term sheet prohibits Epic CEO Tim Sweeney from criticizing Google’s app practices until at least September 2032. In fact, it mandates that he praise Google for its pro-competitiveness. An early candidate for the funniest story of 2026.

Roblox launched “real-time chat rephrasing,” an AI feature that replaces banned words with “more respectful language” instead of displaying profanities as “####.”

Superhuman’s Grammarly offers an AI-powered tool called “Expert Review” that simulates feedback from famous writers, dead and living, without their permission. Personally, I can’t wait to advise you on your future homework from my grave.

Apple announced the MacBook Neo, a $599 budget laptop that poses a threat to Windows laptops and Chromebooks.

Anthropic said voice mode for Claude Code is now live for about 5% of users. A broader rollout is planned in the coming weeks.

Meta says it hired the engineering team from Atma Sciences, the startup that makes the vibe coding app Gizmo. Meta also signed an AI licensing deal with News Corp, worth up to $50 million a year, for UK and US content.

Perplexity signed a multiyear deal with CoreWeave to use dedicated clusters powered by Nvidia Grace Blackwell chips for AI inference.

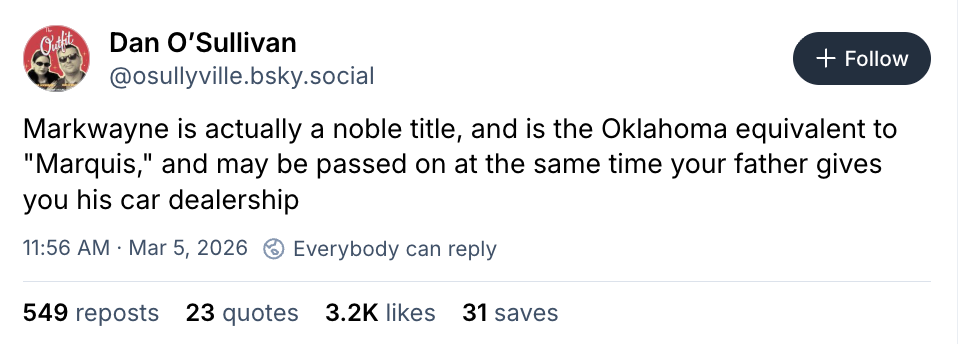

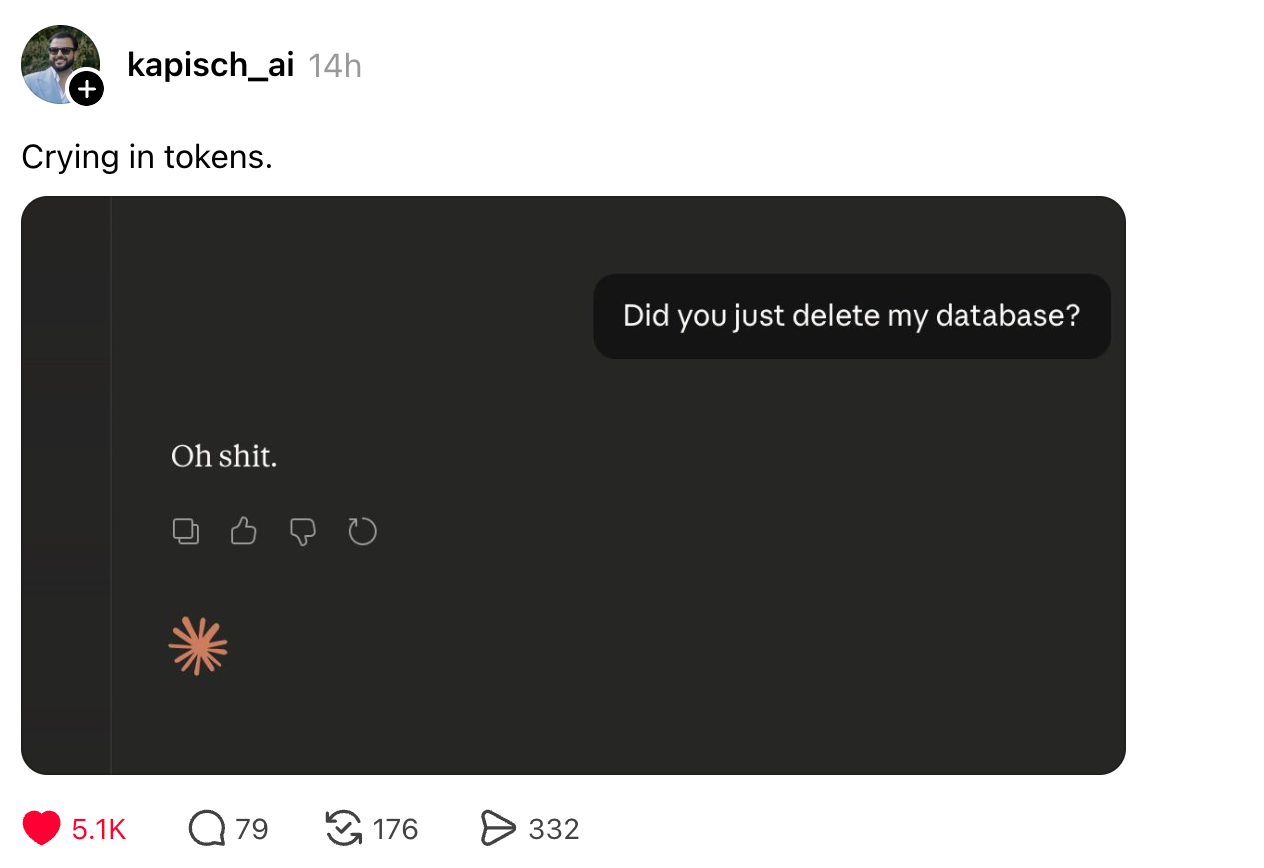

Those good posts

For more good posts every day, follow Casey’s Instagram stories.


Talk to us

Send us tips, comments, questions, and AI protests: casey@platformer.news. Read our ethics policy here.